Last week we identified three features of a learning process that can help us deal effectively with change in the complex, human, embedded systems we know as organisations. First, multiple forms of learning are involved (single, double and triple-loop learning) and often these must be used simultaneously. Second, the learning process consists of cycles of knowledge accumulation in which the learners’ understanding of the problem might change. Third, learners engage in robust explanatory reasoning. This not only delivers a more robust and sustainable solution, but also enables learning to be transferred to other situations.

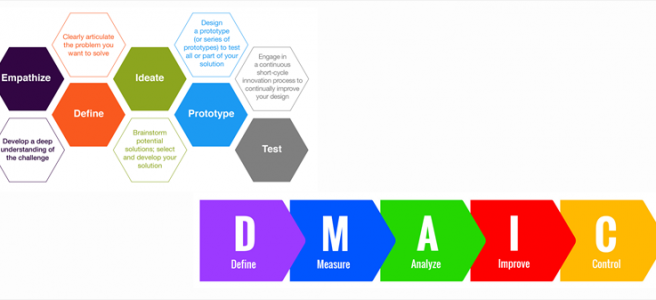

We then considered four well-known learning models (Lewin, Kotter, ADKAR and PDCA) to see how well they support these requirements. We concluded that PDCA does support an iterative process, but otherwise none of the models provide much support for the three requirements. If we broaden our scope a little and look not just at models for change but also at more generic frameworks, we might find one that provides better support for these requirements. Readers have suggested we consider Design Thinking (thanks Mark) and DMAIC (hat tip to Frank). So, let’s take a closer look.

But before we do that, it may be worth taking a moment to consider the difference between a model and a framework. A model is generally a representation of something in the real world and therefore consists of a set of objects or concepts that are put together in a certain way for a certain purpose. Frameworks are also groups of concepts (less often objects) but both the concepts and their relationships with each other are loosely defined. This allows frameworks to be customised for application in a broader range of situations and for a broader set of purposes.

DMAIC, which looks more like a model than a framework, stands for the five steps in the sequence in which action is taken to address an issue: Define, Measure, Analyse, Improve and Control. It is very much like PDCA, but includes the control step that ensures the change is sustained, generally by a product champion. The analysis process usually involves a group, so group learning does take place, though with a focus on single-loop and, to some extent, triple-loop learning because diversity in thinking is welcomed. Once you’ve done a DMAIC, if the problem isn’t resolved or improvement is needed, you do it again, so like PDCA, DMAIC can be an iterative process. Since DMAIC has its antecedents in Six Sigma, it draws on analytical tools such as fishbone diagrams and the five whys to locate the root cause of the problem.

It does appear at first glance that DMAIC meets the three learning requirements, but a closer look reveals some gaps. As we have seen, unarticulated assumptions can result in suboptimal solutions without anyone realising this until the change has actually been implemented. There is no explicit requirement in DMAIC to engage in double-loop learning, which means DMAIC can result in solutions that are not sustained. While DMAIC does incorporate some tools for analysis, the underlying assumption is that at any point there is one ‘right’ solution: the one that surfaces after the analysis. We have seen earlier that this assumption is characteristic of the mechanistic view of organisations, whereas organisations are complex, adaptive and human. So DMAIC doesn’t really meet all of the requirements we’ve identified.

It is hard to put together a concise definition of Design Thinking (DT). This is partly because it is a loose collection of principles, concepts and tools, but also because each person who looks at it sees it a little differently. DT was originally intended as a move away from the conventional process of first designing a product, then building it and finally taking it to market. This approach carries the risk that if the designers do not accurately understand the needs of the market, the customer might get something different from what they want or need. With DT the process was changed to enable iterative prototyping and testing. Customers, represented by carefully selected individuals, interact with the product or service and provide feedback, which is used to adjust the prototype. This is repeated over and over again till the focus group customers confirm the product is right for their needs. At this point the product is deemed ready for the market. The second departure from the traditional model, and also from some of the models we have looked at above that involve quantitative and statistical data, is the requirement that the design team “walks in the customer’s shoes”, empathising with them, rather than just obtaining a set of data that the designers interpret as customer requirements.

DT is often described as distinguished by five characteristics: a human-centred approach, experimentation with artefacts, multidisciplinary collaboration, a holistic view of complex problems and a six-step (understand, observe, define, ideate, prototype and test) process. The framework looks promising because it is explicitly intended to address the messy problems we find in complex, embedded human systems.

Let’s see how it stacks up against our three learning requirements. First, multiple learning modes. DT has a strong focus on collaborative problem solving, acknowledging that it is impossible for a single individual to see the complete picture on their own. The process of empathising with the customer goes some (but not all) of the way to make sure that unspoken assumptions don’t influence designers and result in a gap between the product and customer needs. So DT checks most of the boxes associated with multiple forms of learning, the first requirement. Second, the process is iterative: insights gained from one cycle are carried forward to the next, and the knowledge gained is captured in very visual and tactile artefacts. So DT meets this requirement. Third, DT involves repeated prototyping, receipt of feedback, redesign and reflection on the part of the designers. So there is a certain degree of explanatory work, but as we see below, this is limited.

Are there any gaps between the DT approach and our learning requirements? One aspect that seems to be missing is any attempt at identifying and articulating implicit assumptions. The rationale is that the adverse effect of any of these assumptions will be addressed by the prototyping process. What also seems to be missing is any explicit questioning of the goals associated with delivering the product, such as who the customer stakeholder is. So double-loop learning is not very strongly addressed. The second requirement is met because within the DT framework there is a strong focus on artefacts, and this is leveraged as part of the accumulative learning process. This reliance on artefacts is necessary because for the customer to respond to the prototype, they need to be able to interact with something tangible, if not a physical object. However, DT is focused more on the instrumental (practical) aspect of the problem or need and less on the explanatory aspect. From an organisational perspective, this might be sufficient, but it isn’t clear how DT results in knowledge that can be transferred from one situation to another. As we have seen earlier, everything we do is informed by some sort of theory, good or bad. If we are not doing something to change our theoretical frame, it is likely we will continue to respond largely as we have done in the past. It looks like DT may be falling short on the third requirement too.

Are there frameworks that do address the learning requirements in a better way? We’ll take a look next week.

If you are interested in learning more about organisational alignment, how misalignment can arise and what you can do about it, join the community. Along the way, I’ll share some tools and frameworks that might help you improve alignment in your organisation.